My VCAP5-DCA Experience

Today is brilliant. I received my VCAP5-DCA results. I passed!!!

I’m very, very happy, because the exam didn’t go the way I wanted it to.

I ran into some difficulties during the exam, on top of the real difficulty of the exam itself.

First of all, the remote access to the lab was horrible! It was so slow that switching between applications within an RDP session took several seconds. I’m not talking about 3 or 4 seconds, but more like 20… This put a lot of pressure on my shoulders as the clock kept running.

Secondly, you will receive only a few sheets of paper during the exam. Ask for extra ones! The tasks you have to go through give you a lot of information. Write down the important points; that way you won’t have to switch back and forth between the task description screen and the RDP session screen. And if the remote session is slow, it will save you precious time.

Thirdly, if you have a task, or a part of a task, that you don’t know how to do, note it and go on to the next one. Of course you will have access to the whole documentation, but if you don’t know what you are looking for and where in the documentation you might find it, simply forget it! You don’t have a lot of time to get through all the tasks. Don’t waste it hunting for information you don’t know about. You might lose too much time on a single task and run out of time for other tasks that you could have handled. Like I said: if you don’t know a point, skip it!

Besides that, the DCA is really tough. It tests your ability to administer and troubleshoot a virtual infrastructure.

To prepare, I spent around 40 hours studying. I used several resources like the vBrownBag and the 3 following sites that are a gold mine of information:

http://paulgrevink.wordpress.com

This exam was a thrilling experience for me: before, during and at the end, when I received the result.

I sincerely want to thank @egrigson (http://www.vexperienced.co.uk); @paulgrevink (http://paulgrevink.wordpress.com); @jaslanger (http://www.virtuallanger.com) and of course @cody_bunch (http://professionalvmware.com/brownbags)

And finally, my old friend @ErikBussink who helped out during my preparation.

Without you guys it would have been much, much more difficult to pass this one. Thank you again.

Adding/Removing Virtual Machines’ PortGroups for Standard Switch at cluster level with PowerCLI

vSphere 4 brought a nice networking enhancement:

Virtual Distributed Switches (vDS)

Apart from the specific features bound to the vDS, it is nice to define a new VM PortGroup only once for an entire group of hosts. Of course there are pros and cons to both vDS and Standard Switches. For me, one big disadvantage of the vDS is that you must have an Enterprise Plus or a vSphere for Desktop licence to use it with your hosts.

Here I will stick to the centralized “add and remove” of VM PortGroups.

Without a vDS, adding or removing a PortGroup in a cluster of 20 hosts can take some time. And I have several clusters like that. No money for Enterprise Plus 😦

So I asked my pal for some help –> PowerCLI

I made a handy script to add a new VM PortGroup on a Standard Switch to every host in a cluster. And of course I made one to remove a PortGroup from the hosts in a cluster.

This script has been successfully tested against vSphere versions 4.x and 5.x.

To add a PortGroup:

“Add_PortGroup_at_Cluster_Level” -> Download-Script

In my lab I have a Cluster with only 1 PortGroup configured for Virtual Machines.

In this script you have to change the credentials used to connect to the vCenter server, set the correct vCenter address and set the DataCenter name. By default it will add the PortGroup to the default switch, “vSwitch0”. You can change it if you need to. The only settings you can specify during the addition are the PortGroup’s name and its VLAN. The other settings keep their defaults.

Once the script has launched and authenticated, you get a list box containing every cluster present in the Data Center:

Select the cluster in which you want to add the PortGroup

Enter a name for your new PortGroup

The script will check if a PortGroup with the same name already exists and exit if so.

Then you specify a VLAN number between 0 and 4095.

The PortGroup will be added to the hosts:

This will be reflected at the cluster level:

And at the host level
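For readers who want to see the idea in code, here is a minimal sketch of the add operation. It is not the downloadable script: the function names Test-VlanId and Add-PortGroupToCluster and their parameters are made up for this illustration, there is no list box, and it assumes PowerCLI is loaded and Connect-VIServer has already been run.

```powershell
# Pure helper: validate the VLAN range mentioned above (0 to 4095).
function Test-VlanId {
    param([int]$VlanId)
    return ($VlanId -ge 0 -and $VlanId -le 4095)
}

# Hypothetical wrapper (not the author's script). Requires PowerCLI and an
# existing Connect-VIServer session when it is actually called.
function Add-PortGroupToCluster {
    param(
        [Parameter(Mandatory = $true)][string]$ClusterName,
        [Parameter(Mandatory = $true)][string]$PortGroupName,
        [Parameter(Mandatory = $true)][int]$VlanId,
        [string]$SwitchName = 'vSwitch0'
    )
    if (-not (Test-VlanId -VlanId $VlanId)) {
        throw "VLAN ID must be between 0 and 4095."
    }
    $vmHosts = Get-VMHost -Location (Get-Cluster -Name $ClusterName)

    # Stop before changing anything if the name already exists on any host.
    foreach ($vmHost in $vmHosts) {
        $existing = Get-VirtualPortGroup -VMHost $vmHost -Name $PortGroupName -ErrorAction SilentlyContinue
        if ($existing) {
            throw "PortGroup '$PortGroupName' already exists on $($vmHost.Name)."
        }
    }

    # Create the PortGroup on the chosen standard switch of every host.
    foreach ($vmHost in $vmHosts) {
        $vSwitch = Get-VirtualSwitch -VMHost $vmHost -Name $SwitchName
        New-VirtualPortGroup -VirtualSwitch $vSwitch -Name $PortGroupName -VLanId $VlanId | Out-Null
    }
}
```

A call would look like: Add-PortGroupToCluster -ClusterName "Cluster-Name" -PortGroupName "VM-VLAN100" -VlanId 100. Checking every host before creating anything avoids ending up with the PortGroup on only half of the cluster.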

To remove a PortGroup:

“Remove_PortGroup_at_Cluster_Level” -> Download-Script

In my lab I have a Cluster with 2 PortGroups configured for Virtual Machines.

As with the other script, you have to change the credentials used to connect to the vCenter server, set the correct vCenter address and set the DataCenter name.

The only setting you can specify during the removal is the PortGroup’s name.

Once the script has launched and authenticated, you get a list box containing every cluster present in the Data Center:

Select the cluster from which you want to remove the PortGroup

Enter the name of the PortGroup you want to remove

At this point, the script checks that no VMs are connected to the PortGroup. If any are, it warns you and stops:

If no VMs are connected, the PortGroup is removed from the hosts:

This will be reflected at the cluster level:
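The removal can be sketched in the same way. Again this is only an illustration under assumptions, not the downloadable script: Test-PortGroupInUse and Remove-PortGroupFromCluster are hypothetical names, and the second function needs PowerCLI and an open vCenter session when it is called.

```powershell
# Pure helper: is the PortGroup name among a VM's adapter network names?
function Test-PortGroupInUse {
    param([string[]]$AdapterNetworkNames, [string]$PortGroupName)
    return [bool]($AdapterNetworkNames -contains $PortGroupName)
}

# Hypothetical wrapper (not the author's script).
function Remove-PortGroupFromCluster {
    param(
        [Parameter(Mandatory = $true)][string]$ClusterName,
        [Parameter(Mandatory = $true)][string]$PortGroupName
    )
    $cluster = Get-Cluster -Name $ClusterName

    # Collect the VMs of the cluster that still have an adapter on the PortGroup.
    $connected = Get-VM -Location $cluster | Where-Object {
        $names = Get-NetworkAdapter -VM $_ | ForEach-Object { $_.NetworkName }
        Test-PortGroupInUse -AdapterNetworkNames $names -PortGroupName $PortGroupName
    }
    if ($connected) {
        Write-Warning "VMs still connected to '$PortGroupName': $(($connected | ForEach-Object { $_.Name }) -join ', ')"
        return
    }

    # No VM uses it: remove the PortGroup from every host that has it.
    foreach ($vmHost in Get-VMHost -Location $cluster) {
        Get-VirtualPortGroup -VMHost $vmHost -Name $PortGroupName -ErrorAction SilentlyContinue |
            Remove-VirtualPortGroup -Confirm:$false
    }
}
```

The VM check runs once for the whole cluster before any host is touched, so a single connected VM is enough to leave every host unchanged.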

Disabling/Enabling VAAI on vSphere with PowerCLI

vStorage APIs for Array Integration (VAAI) is a very nice feature that offloads several storage operations from the hosts to the array. I tested these features and was highly impressed by the results.

Here is a small example, a Storage vMotion of a VM with a 35 GB lazy-zeroed VMDK:

Without VAAI, it took 02:50 to complete and used almost 500 MB/s of bandwidth between the host and the storage

With VAAI, it took 02:18 to complete and used almost no bandwidth between the host and the storage

For these tests, I needed to turn VAAI on and off at the host level. VMware has a very good KB on disabling VAAI.

The only method missing from the KB -> PowerCLI

So I wrote a small script to turn VAAI on and off at the host level. I tested it on vSphere 4.1 and 5.0.

In my script you can manually enter the hosts or simply specify a whole group by using, for example: Get-VMHost -Location (Get-Cluster %clustername%)

It checks whether the host is in maintenance mode to avoid any risk when performing the actions. If the host is not in maintenance mode and you don’t want to set it, the script ends.

After modifying your host, I recommend rebooting it. Normally this is unnecessary, but I have run into situations where some of the features weren’t working correctly after turning VAAI on. A reboot corrected it.
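To give an idea of what such a toggle can look like, here is a minimal sketch. It is not my original script: it assumes the three host advanced settings that VMware documents for VAAI, it uses the Get-AdvancedSetting and Set-AdvancedSetting cmdlets (older PowerCLI releases would need Set-VMHostAdvancedConfiguration instead), and the function names are made up for the example.

```powershell
# The three host-level advanced settings that control VAAI (1 = on, 0 = off).
$VaaiSettings = @(
    'DataMover.HardwareAcceleratedMove',
    'DataMover.HardwareAcceleratedInit',
    'VMFS3.HardwareAcceleratedLocking'
)

# Pure helper: translate the wanted state into the setting value.
function Get-VaaiValue {
    param([bool]$Enabled)
    if ($Enabled) { return 1 } else { return 0 }
}

# Hypothetical wrapper: needs PowerCLI and an open vCenter session when called.
function Set-HostVaai {
    param(
        [Parameter(Mandatory = $true)]$VMHost,
        [Parameter(Mandatory = $true)][bool]$Enabled
    )
    # Same safety rule as described above: only touch hosts in maintenance mode.
    if ($VMHost.ConnectionState -ne 'Maintenance') {
        Write-Warning "$($VMHost.Name) is not in maintenance mode, nothing changed."
        return
    }
    $value = Get-VaaiValue -Enabled $Enabled
    foreach ($name in $VaaiSettings) {
        Get-AdvancedSetting -Entity $VMHost -Name $name |
            Set-AdvancedSetting -Value $value -Confirm:$false | Out-Null
    }
}
```

For a whole cluster you could pipe the hosts through it, for example: Get-VMHost -Location (Get-Cluster "Cluster-Name") | ForEach-Object { Set-HostVaai -VMHost $_ -Enabled $false }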

VMware KB 2014323: PowerCLI to set qla2xxx option at cluster level

I recently faced a nasty issue when I updated my vSphere 4.1u2 FCoE hosts to vSphere 5.0u1.

Right after the migration to vSphere 5, major performance issues appeared with the underlying storage. I was seeing read/write latencies of several thousand milliseconds, which led to dead path detection and of course I/O problems within the VMs.

The servers were working perfectly with vSphere 4.1, so we decided to quickly roll back from 5.0u1 to 4.1u2.

After restoring a proper virtualisation service, I opened an SR with VMware, who asked me to open an SR with the storage array vendor, who asked me to open an SR with the FC/FCoE switch vendor. 3 SRs for 1 problem :(

The servers are Dell PowerEdge R715 with QLogic 8150 CNA cards.

After several diag file uploads, patches at the array level and further research, one of the support teams (not VMware’s) came up with a very interesting KB from the VMware website -> I/O activity pauses on virtual machines with QLogic 81xx series CNA cards on ESXi 5.0

This KB sounded good to my ears. The workaround it describes was tested on a single host with the desired result -> no more latency or dead paths.

So we decided to apply the workaround on every FCoE host.

The KB shows how to apply the workaround with esxcli. If you have a vMA and not too many hosts that is fine, but I wanted a more “industrial” way. So I used PowerCLI.

My script below is designed to be applied at the cluster level to hosts running vSphere 5, having the vmhba model “ISP81xx-based 10 GbE FCoE to PCI Express CNA” and using the driver “qla2xxx”. It will only run on hosts in maintenance mode and will ask for a reboot after its completion.

The model name mentioned above is the one returned by the cmdlet “Get-VMHostHba” for QLogic 8150/8152 CNA cards. If you are using another model impacted by this issue, as described on the QLogic website, check whether it returns the same model name. I’ve included a print mode for this information in the script.

It helped me a lot.

Eric

Start-Script->

## options dedicated to Qlogic CNAs and vSphere 5 issue -> VMware kb: http://kb.vmware.com/kb/2014323
## build 1.00
## Eric Krejci
## ekrejci.wordpress.com
## Twitter – @ekrejci

## on error stop the script
$ErrorActionPreference = "Stop"

$Username = "DOMAIN\USERNAME"
$Password = Read-Host "Enter Password" -AsSecureString

## build a credential object so the password never has to be converted back to plain text
$Credential = New-Object System.Management.Automation.PSCredential ($Username, $Password)

### declare your vcenter
$vCenterserver = "vcenter.fqdn"

### declare the name of the target DataCenter
$TargetDCName = "DC-NAME"

### declare the name of the target Cluster
$TargetClusterName = "Cluster-Name"

### create an empty array to store the modified hosts
$AppliedESXs = @()

### connect to the vCenter
Connect-VIServer -Server $vCenterserver -Credential $Credential

## retrieve the hosts in the target cluster with powerstate -> PoweredOn AND vSphere version 5 or later
## (the major version is compared as a number; compared as strings, "10" would rank below "5")
$TargetESXs = Get-VMHost -Location (Get-Cluster -Name $TargetClusterName -Location (Get-Datacenter -Name $TargetDCName)) | where { ($_.PowerState -eq "PoweredOn") -and (([version]$_.Version).Major -ge 5) }

foreach ($TargetESX in $TargetESXs) {
    $TargetESX.Name

    ## we check if the host is in maintenance mode to avoid any risks when performing the actions. if you don't want to set the maintenance mode, the script ends.
    if ($TargetESX.ConnectionState -eq "Maintenance") {
        $TargetESX.Name + " is already in maintenance mode"
    } else {
        if ("Y" -eq ((Read-Host "Your ESX is not in maintenance! Do you want to set now? Enter Y or N").ToUpper())) {
            Set-VMHost -VMHost $TargetESX -State Maintenance
        } else {
            "exit"
            exit
        }
    }

    ## uncomment the following to print the name, driver and model of the current vSphere's VMHBAs
    #Get-VMHostHba -VMHost $TargetESX | %{"Name: " + $_.Name + "; driver: " + $_.Driver + "; Model: " + $_.Model}

    ## retrieve every VMHBA of the server with model "*10 GbE FCoE to PCI Express CNA*" and using the driver "qla2xxx"
    $TargetESXHBAs = Get-VMHostHba -VMHost $TargetESX | where { ($_.Driver -eq "qla2xxx") -and ($_.Model -like "*10 GbE FCoE to PCI Express CNA*") }

    ## if we have corresponding Qlogic CNAs then we apply the option described in the VMware KB.
    if ($null -ne $TargetESXHBAs) {
        ## getting the module "qla2xxx" from the current vSphere
        $qla2xxx = Get-VMHostModule -VMHost $TargetESX -Name "qla2xxx"

        ## setting the module "qla2xxx" with the good option
        $qla2xxx | Set-VMHostModule -Options "ql2xenablemsix=0"

        ## remember the host so it can be rebooted at the end
        $AppliedESXs += $TargetESX
    }
}

## you must reboot to have the setting applied. if you don't want to do it now, don't forget to reboot the hosts later.
if ("Y" -eq ((Read-Host "Do you want to restart the ESX Servers? Enter Y or N").ToUpper())) {
    "Rebooting the ESX Server"
    foreach ($AppliedESX in $AppliedESXs) {
        $AppliedESXView = Get-View -Id $AppliedESX.Id
        ## $false = do not force the reboot; the host is already in maintenance mode
        $AppliedESXView.RebootHost($false)
        "End of configure ESX server " + $AppliedESX.Name
    }
}

"Finished"
Disconnect-VIServer -Confirm:$false

<-End-Script

Update vSphere through VMware Update Manager with PowerCLI

I’m always amazed by what you can do with PowerCLI.

Currently I’m running version 4.1 of vCenter/vSphere.

I use a complete PowerCLI script to configure freshly installed vSphere hosts. It configured everything except the latest patches.

VMware released VMware Update Manager PowerCLI some time ago. It needs to be installed in addition to PowerCLI.

You can download the Update Manager PowerCLI installer package from the product landing page at https://www.vmware.com/support/developer/ps-libs/vumps

Be careful to download the same release as the one you are using in your infrastructure.

I tried release 5.0 against my 4.1 infra and it didn’t work at all…

I won’t put my vSphere hosts’ configuration script in this post, but I’ve made a dedicated one for VUM PowerCLI.

Okay, it does what you can already do with the vSphere Client, but it includes the necessary cmdlets so you can integrate them into any script you like.

I made this script:

vCenter_Update_vSphere_w_VUM_dyn_listbox

In the script you have to change the credentials used to connect to the vCenter server and set the correct vCenter address. You can also specify the Datacenter in which you want to work.

The first thing this script does is verify that the VUM snap-in is loaded. If it is not, the script exits.

When you are ready to go, the script gives you the list of every host that is either connected or in maintenance mode.

Once a host is selected, the script asks whether you want to download the latest patches.

Then the host is scanned, after which the compliance status is checked. If no baseline is attached to the host, the script exits.

Only the baselines of type “patch” with which the host is not compliant are taken into consideration and remediated.

As I said earlier, this code can easily be integrated into another script.
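The core of that flow fits in a few lines. The following is a rough sketch and not the script linked above: it assumes the cmdlet names of the 4.x/5.x Update Manager PowerCLI (Download-Patch, Scan-Inventory, Get-Compliance, Remediate-Inventory; later releases renamed some of them), and Select-NonCompliant and Update-HostWithVum are hypothetical names.

```powershell
# Pure helper: keep only the compliance records reported as not compliant.
function Select-NonCompliant {
    param([object[]]$Compliance)
    return @($Compliance | Where-Object { $_.Status -eq 'NotCompliant' })
}

# Hypothetical wrapper: needs PowerCLI, the VUM PowerCLI snap-in and an
# open vCenter session when it is called.
function Update-HostWithVum {
    param(
        [Parameter(Mandatory = $true)]$VMHost,
        [switch]$DownloadPatches
    )
    if ($DownloadPatches) {
        # Fetch the latest patches into the Update Manager repository.
        Download-Patch
    }

    # Patch baselines attached to the host, directly or through a parent object.
    $baselines = Get-Baseline -Entity $VMHost -BaselineType Patch -Inherit
    if (-not $baselines) {
        Write-Warning "No patch baseline attached to $($VMHost.Name)."
        return
    }

    # Scan, then remediate only the baselines the host does not comply with.
    Scan-Inventory -Entity $VMHost
    $compliance = Get-Compliance -Entity $VMHost -Baseline $baselines
    foreach ($item in (Select-NonCompliant -Compliance $compliance)) {
        Remediate-Inventory -Entity $VMHost -Baseline $item.Baseline -Confirm:$false
    }
}
```

Dropped into a host configuration script, a call such as Update-HostWithVum -VMHost (Get-VMHost "esx01.fqdn") -DownloadPatches would be the last step after the other settings are applied; the host name here is only a placeholder.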

Storage vMotion an entire datastore with PowerCLI

Storage vMotion is one of the most astonishing features that VMware brought to the universe of virtualization.

I’m certain that storage admins will admit that migrating data from one storage array to another can be quite complicated. On a physical host it is almost impossible to do such a migration without any downtime.

Well, Storage vMotion accomplishes the full migration of VMs’ virtual disks without downtime! There is some performance degradation during the migration, but no downtime.

One year ago we had to change the storage array used by my virtual infrastructure (vSphere 4.0):

More than 60 hosts using 50 TB of disk space for ~500 VMs

I said: OK, let’s Storage vMotion them to the new array.

Even though you can now start a Storage vMotion from the VI Client, for more than 500 VMs it can take some time!

OK, let’s go with some scripting then.

PowerCLI is the absolute solution for virtual admins. Honestly, it is so powerful.

I made this script:

storage_vmotion_entire_datastore_with_reservation

In the script you have to change the credentials used to connect to the vCenter server, set the correct vCenter address and set a percentage of reservation in the destination Datastore. This reservation keeps a VM from being moved when its real usage would not fit in the destination Datastore’s free space with the reservation taken into account.

Basically you enter the source Datastore name and the destination Datastore name.

Then it gets the VMs located in the source Datastore.

For each VM it first checks whether the VM’s UsedSpaceGB is greater than or equal to the destination Datastore’s FreeSpace, taking the specified reservation into account. If it is, the script exits.

Then it checks whether the VM’s ProvisionedSpaceGB is greater than or equal to the destination Datastore’s FreeSpace, again taking the reservation into account. If it is, the script warns you and asks whether you want to continue or exit.

The last check is whether the VM has snapshots. If it does, the script skips it and goes on to the next one.

Once the Storage vMotion of the VM is complete, it goes on to the next one, and so on.

This script only moves VMs without snapshots; it does not move templates.
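The checks described above can be sketched as follows. This is an illustration, not the script itself, and it rests on two assumptions of mine: the reservation is taken as a percentage of the destination capacity that must stay free, and the snapshot check is done before the provisioned-space warning so that no question is asked about a VM that would be skipped anyway. All function names are hypothetical; Move-DatastoreVMs needs PowerCLI (a release exposing CapacityGB and FreeSpaceGB on datastores) and an open vCenter session.

```powershell
# Free space left on the destination once the reserved share is set aside.
# Assumption: the reservation is a percentage of the datastore capacity.
function Get-UsableFreeSpaceGB {
    param([double]$CapacityGB, [double]$FreeSpaceGB, [double]$ReservationPercent)
    return $FreeSpaceGB - ($CapacityGB * $ReservationPercent / 100)
}

# Decide what to do with one VM: Stop, Skip, Warn or Move.
function Get-MoveDecision {
    param([double]$UsedSpaceGB, [double]$ProvisionedSpaceGB, [double]$UsableFreeGB, [bool]$HasSnapshot)
    if ($UsedSpaceGB -ge $UsableFreeGB) { return 'Stop' }          # real usage does not fit
    if ($HasSnapshot) { return 'Skip' }                            # VMs with snapshots are left alone
    if ($ProvisionedSpaceGB -ge $UsableFreeGB) { return 'Warn' }   # could fill up later
    return 'Move'
}

# Hypothetical wrapper around the two helpers.
function Move-DatastoreVMs {
    param(
        [Parameter(Mandatory = $true)][string]$SourceName,
        [Parameter(Mandatory = $true)][string]$DestinationName,
        [double]$ReservationPercent = 10
    )
    foreach ($vm in Get-VM -Datastore (Get-Datastore -Name $SourceName)) {
        # Re-read the destination each time so free space reflects earlier moves.
        $dest = Get-Datastore -Name $DestinationName
        $usable = Get-UsableFreeSpaceGB -CapacityGB $dest.CapacityGB -FreeSpaceGB $dest.FreeSpaceGB -ReservationPercent $ReservationPercent
        $hasSnapshot = [bool](Get-Snapshot -VM $vm)
        $decision = Get-MoveDecision -UsedSpaceGB $vm.UsedSpaceGB -ProvisionedSpaceGB $vm.ProvisionedSpaceGB -UsableFreeGB $usable -HasSnapshot $hasSnapshot

        if ($decision -eq 'Stop') { Write-Warning "$($vm.Name) does not fit, stopping."; return }
        if ($decision -eq 'Skip') { Write-Host "$($vm.Name) has snapshots, skipped."; continue }
        if (($decision -eq 'Warn') -and ('Y' -ne (Read-Host "$($vm.Name) is provisioned beyond the usable space. Continue? Y or N").ToUpper())) { return }

        Move-VM -VM $vm -Datastore $dest | Out-Null
    }
}
```

As a worked example: with a 1000 GB destination, 400 GB free and a 10% reservation, the usable space is 300 GB, so a VM using 350 GB stops the run while one using 100 GB but provisioned at 320 GB only triggers the warning.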

I used this script to migrate my entire infrastructure successfully, without any issue and with a minimal amount of effort.

Piece of cake

MSSQL 2008 Backup compression

Backup compression is a nice feature that came with MSSQL 2008 and later.

It can be handy in some situations but, of course, compression costs resources on the server.

I did some tests with an MSSQL server on which I restored the vCenter and VMware Update Manager DBs from a real production environment.

My MSSQL server has the following specs:

Is a VM = yes

OS = Windows 2008 R2

MSSQL = 2008 R2

CPU = 1

RAM = 3 GB

Local hard disk = 1x SATA 7200

Multi partition = OS, DB Data, DB Logs, Backup repository

User Databases = 2 in simple recovery model

For my first test, in Management Studio, I created a maintenance plan with a single backup task and no schedule. I will run it manually.

Let’s enter a name

Now we will add the necessary tasks

Drag and drop a Back Up Database task from the toolbox

Edit it

Choose the type -> Full; the databases -> user DBs; don’t forget the extension “bak_full_usr_NC”; the verify; the subdir. For the compression I left the default, because compression is not active at the MSSQL server level.

Let’s save this maintenance plan.

Now I’m going to create another plan with compression enabled

Let’s enter a name

Now we will add the necessary tasks

Drag and drop a Back Up Database task from the toolbox

Edit it

Choose the type -> Full; the databases -> user DBs; don’t forget the extension “bak_full_usr_WC”; the verify; the subdir. For the compression, choose “Compress Backup”.

Let’s save this maintenance plan.

First, run the maintenance plan without compression

The results:

Time to complete – 07 min 29 sec

vupdatemanager_DB_Backup – 682 MB

Mainly disk access: reads on the DB and writes on the backup device (50 MB avg. total), then reads on the backup device for the verify phase (80 MB avg. read). CPU average of 2% for sqlservr.exe

Now let’s run the maintenance plan with compression

The results:

Time to complete – 02 min 42 sec

vupdatemanager_DB_Backup – 89 MB

During the backup, sqlservr.exe showed high CPU usage, averaging 50% with peaks at 100%, and heavy disk access (90 MB avg. total).

The first observation is that backup compression drastically decreases backup time, and its compression ratio is very good for vCenter and Update Manager DBs.
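To put numbers on that observation, here is a small calculation using only the figures reported above. Get-BackupComparison is a helper name invented for this example. Keep in mind that the sizes are those of the vupdatemanager_DB backup alone, while the durations are for the whole plan run.

```powershell
# Compare an uncompressed and a compressed backup run.
function Get-BackupComparison {
    param(
        [double]$PlainSizeMB,
        [double]$CompressedSizeMB,
        [TimeSpan]$PlainDuration,
        [TimeSpan]$CompressedDuration
    )
    [pscustomobject]@{
        # How many times smaller the compressed backup is.
        CompressionRatio  = [math]::Round($PlainSizeMB / $CompressedSizeMB, 2)
        # Share of disk space saved, in percent.
        SpaceSavedPercent = [math]::Round((1 - $CompressedSizeMB / $PlainSizeMB) * 100)
        # How many times faster the compressed run completed.
        SpeedUp           = [math]::Round($PlainDuration.TotalSeconds / $CompressedDuration.TotalSeconds, 2)
    }
}

# Figures from the two test runs: 682 MB in 07:29 versus 89 MB in 02:42.
$result = Get-BackupComparison -PlainSizeMB 682 -CompressedSizeMB 89 `
    -PlainDuration (New-TimeSpan -Minutes 7 -Seconds 29) `
    -CompressedDuration (New-TimeSpan -Minutes 2 -Seconds 42)
$result
# CompressionRatio = 7.66, SpaceSavedPercent = 87, SpeedUp = 2.77
```

So in this test the compressed backup is roughly 7.7 times smaller and the run finishes almost 2.8 times faster, at the price of the CPU load described above.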

On the other hand, compression also increases the CPU usage and disk access of your server.

Before enabling compression, I would check whether the CPU activity of your server leaves room for it. Really do use the off-peak hours of your server to run compressed backups. And of course try it on a “small” database first to see if it works fine, then progressively compress the backups of other databases.

Of course compression also works for differential and transaction log backups.

If the compression ratio is very good, you might also change your backup strategy to a daily full, instead of full + differential

For those who prefer the T-SQL way, simply add the option COMPRESSION to the BACKUP statement:

Ex:

BACKUP DATABASE [<DB-NAME>] TO DISK = N'<path to backup folder>\<backup device name>' WITH NOFORMAT, NOINIT, NAME = N'<name of your DB backup>', SKIP, REWIND, NOUNLOAD, COMPRESSION, STATS = 10

vCenter MSSQL DBs backup and maintenance

Sometimes, when you are a virtualization specialist, you might not be a DB specialist. Unfortunately vCenter uses DBs, meaning that you will have to deal with them at one time or another.

Here I just want to give a generic and easy way to set up backup and maintenance for these DBs on MSSQL.

The following implementation works fine for a vCenter 4.1 or 5 infrastructure of 300 to 500 VMs.

Let’s agree on several points:

– vCenter 4.1 u2 already installed (preferably not yet in production)

– MSSQL Standard 2008 R2 installed locally and used only for vCenter DBs, with the SQL Agent running

– The non-system databases will be backed up once a day and the backups kept for 7 days. The restore point in case of a disaster will be the last backup (recovery model set to simple)

– We also want to take care of the system DBs

– We have 2 DBs: vCenter (vCenter_DB) and Update Manager (vupdatemanager_DB)

– The DBs will be backed up locally on the server. The server is backed up by third-party backup software that saves its system files; it will also pick up the DB backups

– Backup compression is not enabled in this scenario. Check this post to figure out whether you want to, or can, enable it!

First let us take care of the system DBs, which might be useful in case of a major crash of your server.

We will do all of this through the maintenance plans of MSSQL

On your vCenter’s MSSQL server, launch SQL Server Management Studio and log on to your SQL instance

Once inside, expand Management and right-click Maintenance Plans to start a new Maintenance Plan Wizard. (I told you I would use the easy way, to start at least…)

Enter a name and a description, and leave the default “single schedule”

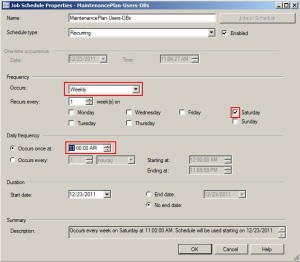

Click on change to actually set the schedule

We will let this maintenance plan run once a week. For an MSSQL instance used exclusively by vCenter that is enough; other uses of MSSQL would of course need a different frequency

Select the following tasks

Personally I move the “Shrink” task right after the backup one and the “Clean History” task to the end.

Now let’s select the databases for every task

Select the System Databases

Do the same for the other tasks:

I will only run a rebuild task once a week. Here you can find a very good article on index rebuild/reorganize. If you have an Enterprise edition of MSSQL you can rebuild them online.

Now on the backup task we again choose the system databases

We also create sub-dirs, verify the backup integrity and set the backup file extension to: bak_full_sys

Select the system DBs for the shrink task.

In the maintenance cleanup task we ask the plan to remove the backup files of the system databases older than 1 week. Of course you can set a longer retention period if you wish. Note that I specify the same file extension as the one set in the previous step. That way I don’t risk deleting any other files
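To make the retention rule concrete, here is what the same selection looks like when written by hand in PowerShell. This only illustrates the rule (same extension, older than the retention period); it is not what the maintenance plan runs internally, and Get-ExpiredBackupFile, the example path and its parameters are hypothetical.

```powershell
# List the backup files with the given extension that are older than the
# retention period, searching sub-directories as well.
function Get-ExpiredBackupFile {
    param(
        [Parameter(Mandatory = $true)][string]$Path,
        [Parameter(Mandatory = $true)][string]$Extension,   # e.g. 'bak_full_sys'
        [int]$RetentionDays = 7,
        [datetime]$Now = (Get-Date)
    )
    $cutoff = $Now.AddDays(-$RetentionDays)
    Get-ChildItem -Path $Path -Recurse -File |
        Where-Object { $_.Extension -eq ".$Extension" -and $_.LastWriteTime -lt $cutoff }
}

# Example use (path is a placeholder); -WhatIf only shows what would be deleted:
# Get-ExpiredBackupFile -Path 'D:\Backup' -Extension 'bak_full_sys' | Remove-Item -WhatIf
```

Matching on the exact extension is what keeps the daily differential files and anything else in the folder out of reach of the cleanup.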

I leave the history cleanup task at its defaults. This task cleans the backup, agent job and maintenance plan history for the whole SQL Server. It only needs to be set once. More details here

Finally I want a detailed trace of the plan execution for troubleshooting purposes. I have a dedicated folder for this.

Click on Finish and we are done.

Back in the studio you can now see 2 new elements:

Now, just before creating the plan for the user databases, I will check that the recovery model of my databases is set to simple. This means that the only restore point is the last backup.

In the studio, expand Databases and right-click -> Properties on each of your user databases:

Go to the “Options” page and set the recovery model to “Simple”

Do the same with the others.

Once the recovery model is set, let’s create the plan for the vCenter DBs. This one will be made in 2 steps.

First the name and description

Then we set the schedule. In this step we set the weekly one

Let’s choose the tasks. (We won’t select the Clean Up History task again because it is already present in the system DBs’ plan.)

Now, as for the system plan, we choose the user databases

As with the system DBs, I will only run a rebuild task once a week. Here you can find a very good article on index rebuild/reorganize. If you have an Enterprise edition of MSSQL you can rebuild them online.

We also create sub-dirs, verify the backup integrity and set the backup file extension to: bak_full_usr

We set the shrink task

In the maintenance cleanup task we ask the plan to remove the backup files of the user databases older than 1 week. Of course you can set a longer retention period if you wish. Note that I specify the same file extension as the one set in the previous step. That way I don’t risk deleting any other files

Finally I want a detailed trace of the plan execution for troubleshooting purposes. I have a dedicated folder for this.

Back in the studio we see the new plan. We are not finished yet. Let’s right-click it and modify it

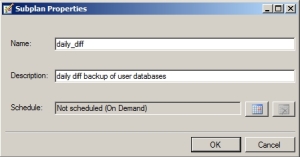

We will add a new subplan to make our daily backups of the user databases.

Click on the Add Subplan button

Let’s enter a name and description

And set a schedule (daily at 10:00 PM)

Now we will add the necessary tasks

Drag and drop a Back Up Database task from the toolbox

Edit it

Choose the type -> Differential; the databases -> user DBs; don’t forget the extension “bak_diff_usr”; the verify and the subdir

We link the backup task to the maintenance one

And edit it

Let’s add another Maintenance Cleanup task, link it to the previous task and edit it

Now let’s select the type “Maintenance Plan Text Reports” and point it to the output report folder. I personally use a retention of 4 weeks because it gives a deeper history of what happened in case of troubleshooting.

Before exiting, we will rename the default Subplan_1. Double-click on it and give it a more explicit name.

We are all set; just save the plan.

If you go to SQL Server Agent -> Jobs, you will see the 2 jobs named after the subplans you have just added and renamed.

Now let’s try these.

First we will do the system ones:

Right-click -> Start Job at Step on the job

Once complete, you can see that new folders have been created in the backup repository

With backup devices inside

In the history repository you will also see the report

Now let’s do the same with the user databases jobs.

Be careful to run the weekly job first. It is on this one that the full backup is set, and we want to have a full backup of the databases before running differential ones. Also be certain to run this plan only during the off-peak hours of your vCenter. The plan will drain its performance when it runs tasks such as the index defrag.

Once complete, you can see that new folders have been created in the backup repository

With backup devices inside

In the history repository you will also see the report

Now let’s just try the last one to be certain it will run successfully.

If we go to the user databases repository we will see the new differential backup file

In the history repository you will also see the report

Voilà, you now have maintenance not only for your vCenter databases but also for the system databases of your MSSQL.

I want to sincerely thank @ErikBussink for his comments and remarks about this post.

Fibre Channel Security

Honestly, I have been working with SANs for several years and I must admit that I have never given Fibre Channel security serious consideration.

I wrongly thought that it was secure by design, with VSANs, WWN zoning, not having every physical port up on the switches, etc…

I recently found some quite interesting, though not that recent, papers pointing out the risks on FC SANs and giving some “best practices” for securing them.

The first one is a chapter extracted from the book “Securing Storage”, ISBN 0321349954

The chapter, called “SAN: Fibre Channel Security”, is only 30 pages long and exposes the FC SAN security risks.

Another one, from an organisation whose publications I wasn’t aware of, is “Securing Fibre Channel Storage Area Network” from the … NSA

This one is an easy one, more like a 4-page “best practice”. Short but interesting.

Good reading.